
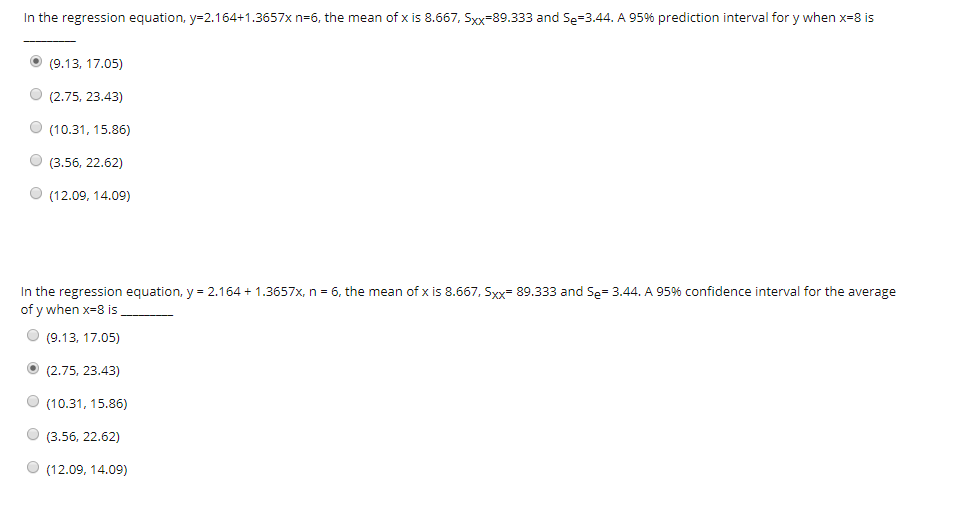

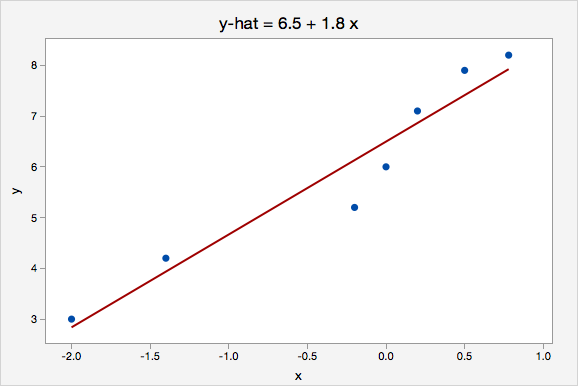

On a scatter diagram, the closer the points lie to a straight line, the stronger the linear relationship between two variables. To quantify the strength of the relationship, we can calculate the correlation coefficient. In algebraic notation, if we have two variables $x$ and $y$, and the data take the form of $n$ pairs (i.e. $[x_1, y_1], [x_2, y_2], \ldots, [x_n, y_n]$), then the correlation coefficient is given by the following equation:

$$r = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2 \, \sum_{i=1}^{n}(y_i - \bar{y})^2}}$$

where $\bar{x}$ is the mean of the $x$ values, and $\bar{y}$ is the mean of the $y$ values. This is the product moment correlation coefficient (or Pearson correlation coefficient). The value of $r$ always lies between -1 and 1. A value close to 1 indicates a strong positive linear relationship (i.e. one variable increases with the other). A value close to -1 indicates a strong negative linear relationship (i.e. one variable decreases as the other increases). A value close to 0 indicates no linear relationship (Fig. 4); however, there could be a nonlinear relationship between the variables (Fig. 5).

Confidence interval for the population correlation coefficient

Although the hypothesis test indicates whether there is a linear relationship, it gives no indication of the strength of that relationship. This additional information can be obtained from a confidence interval for the population correlation coefficient. To calculate a confidence interval, $r$ must be transformed to give a Normal distribution, making use of Fisher's z transformation:

$$z_r = \frac{1}{2} \ln\left(\frac{1 + r}{1 - r}\right)$$

The standard error of $z_r$ is approximately:

$$\frac{1}{\sqrt{n - 3}}$$

and hence a 95% confidence interval for the true population value of the transformed correlation coefficient $z_r$ is given by $z_r - (1.96 \times \text{standard error})$ to $z_r + (1.96 \times \text{standard error})$.

Perhaps the approach of "Granger causality" might help. This would help you to assess whether X is a good predictor of Y or whether Y is a better predictor of X. In other words, it tells you whether beta or gamma is the thing to take more seriously. Also, considering that you are dealing with time series data, it tells you how much of the history of X counts towards the prediction of Y (or vice versa).

A time series X is said to Granger-cause Y if it can be shown, usually through a series of t-tests and F-tests on lagged values of X (and with lagged values of Y also included), that those X values provide statistically significant information about future values of Y.

For example, regress Y(t) on X(t-1), X(t-2), X(t-3), Y(t-1), Y(t-2), Y(t-3). Continue for whatever history length might be reasonable. Check the significance of the F-statistics for each regression. Then do the same in reverse (so, now regress X(t) on the past values of X and Y) and see which regressions have significant F-values.

A very straightforward example, with R code, is found here. Granger causality has been critiqued for not actually establishing causality (in some cases). But it seems that your application is really about "predictive causality," which is exactly what the Granger causality approach is meant for.

$\dagger$ This is a simplification, but it suits the purpose of explaining how one can, and should, perform the analysis to find an optimal ratio without a regression line.
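The lagged-regression procedure behind Granger causality can be sketched in a few lines of Python. This is a minimal illustration, not the R example mentioned in the text: the function name `granger_f_test`, the simulated series, and the choice of three lags are all assumptions made for the demonstration. It compares a regression of Y(t) on its own lags with one that also includes lags of X, and returns the F-statistic for the added X terms.

```python
import numpy as np


def granger_f_test(x, y, lags):
    """F-statistic for whether lagged x improves the prediction of y.

    Restricted model: y(t) on a constant and y(t-1..t-lags).
    Full model: the same plus x(t-1..t-lags).
    Returns (f_stat, df_numerator, df_denominator).
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(y)
    target = y[lags:]
    const = np.ones(n - lags)
    # Column k holds the series shifted back by k steps.
    y_lags = np.column_stack([y[lags - k:n - k] for k in range(1, lags + 1)])
    x_lags = np.column_stack([x[lags - k:n - k] for k in range(1, lags + 1)])
    restricted = np.column_stack([const, y_lags])
    full = np.column_stack([const, y_lags, x_lags])

    def rss(design):
        # Residual sum of squares of an ordinary least-squares fit.
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        return float(resid @ resid)

    rss_restricted, rss_full = rss(restricted), rss(full)
    df1 = lags
    df2 = len(target) - full.shape[1]
    f_stat = ((rss_restricted - rss_full) / df1) / (rss_full / df2)
    return f_stat, df1, df2


# Simulated data (an assumption for illustration): y depends on the previous x.
rng = np.random.default_rng(0)
x = rng.normal(size=500)
y = np.zeros(500)
for t in range(1, 500):
    y[t] = 0.5 * y[t - 1] + 0.8 * x[t - 1] + rng.normal()

f_xy, df1, df2 = granger_f_test(x, y, lags=3)  # does x help predict y?
f_yx, _, _ = granger_f_test(y, x, lags=3)      # does y help predict x?
print(f"x -> y: F = {f_xy:.1f} on ({df1}, {df2}) df")
print(f"y -> x: F = {f_yx:.1f} on ({df1}, {df2}) df")
```

Each F-statistic would then be compared against the F distribution with the reported degrees of freedom, for instance with `scipy.stats.f.sf(f_stat, df1, df2)` if SciPy is available. Repeating the call for several values of `lags` corresponds to trying different history lengths.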

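The Pearson correlation coefficient and its Fisher z confidence interval can be computed directly from their definitions. The sketch below is illustrative only: the function names and the sample data are made up for the example, and the final back-transformation with `tanh` (the inverse of Fisher's z transformation) is an extra step added here to express the interval on the scale of $r$.

```python
import math


def pearson_r(xs, ys):
    """Product moment correlation coefficient of paired observations."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def fisher_ci(r, n, z_crit=1.96):
    """Approximate confidence interval via Fisher's z transformation.

    Returns ((z_low, z_high), (r_low, r_high)). Requires |r| < 1 and n > 3.
    """
    z = 0.5 * math.log((1 + r) / (1 - r))
    se = 1 / math.sqrt(n - 3)
    z_low, z_high = z - z_crit * se, z + z_crit * se
    # tanh inverts the z transformation, mapping the limits back to r.
    return (z_low, z_high), (math.tanh(z_low), math.tanh(z_high))


# Made-up sample data, for illustration only.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]

r = pearson_r(xs, ys)
(z_low, z_high), (r_low, r_high) = fisher_ci(r, len(xs))
print(f"r = {r:.4f}")
print(f"95% CI for z_r: {z_low:.3f} to {z_high:.3f}")
print(f"95% CI for r:   {r_low:.4f} to {r_high:.4f}")
```

The value 1.96 is the Normal quantile for a 95% interval; passing a different `z_crit` gives other confidence levels.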
You could also incorporate time into a more sophisticated model to make better predictions of future values or distributions for the pair $X,Y$.

The model can be improved by using distributions other than the multivariate normal. In a situation with more than two variables/stocks/bonds, you might generalize this to the last (smallest-eigenvalue) principal component. To see the connection between both representations, take a bivariate Normal vector:
